What’s threatening AR glasses adoption? A display problem story

If you’ve ever tried a VR headset or a pair of AR glasses, you’ve likely experienced eye strain, fatigue, and possibly nausea. While these symptoms are often attributed to motion sickness (an issue specific to VR), there is actually a deeper, underlying problem at play. Let’s explore it.

Back in 2019, market research¹ predicted that HMDs (Head-Mounted Displays, encompassing both VR headsets and AR glasses) would reach mass-market adoption between 2021 and 2025. Yet years later, the reality looks quite different: very few people wear these devices in their daily lives. The VR space is currently contracting, with Meta, the dominant VR headset manufacturer, making significant cuts to its VR research division². Meanwhile, new AR glasses initiatives continue to emerge, though at a far slower pace than was envisioned in 2019.

Several factors underlie the slow development and adoption of AR glasses: form-factor constraints and, critically, the challenge of miniaturizing each component sufficiently to fit into a sleek, fashionable design without compromising performance. While most of these obstacles are gradually being overcome through incremental innovation, one remains entirely unsolved at the product level: AR glasses still cannot deliver a proper visual experience. Marketed as all-day wearables, they will fall short of their growth potential until this fundamental visual experience issue is addressed.

The vergence-accommodation conflict problem

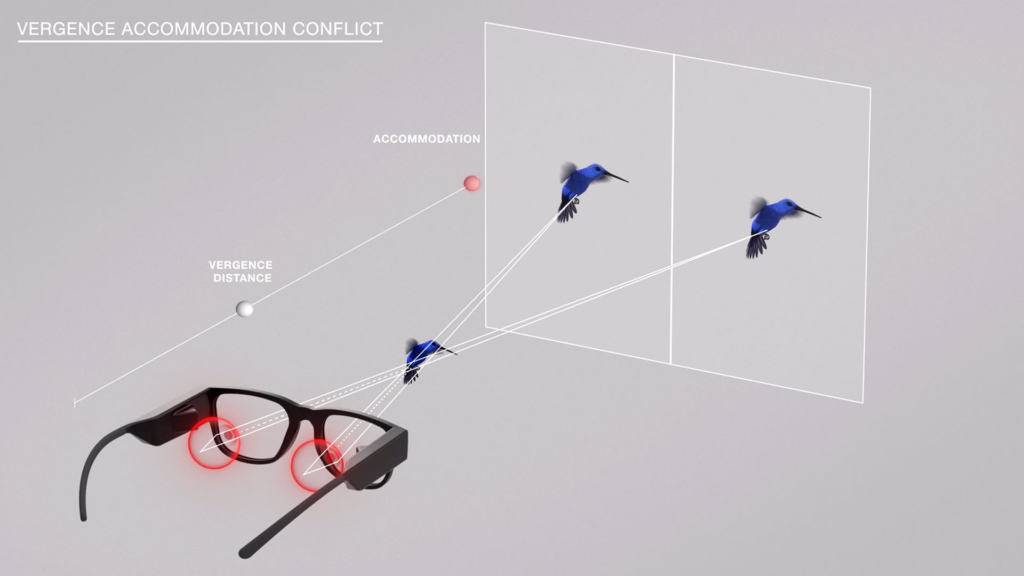

The visual experience is, at its core, a display problem. Today’s AR displays project a flat (2D) image at a fixed distance, much like a TV screen placed about 1 meter from the viewer. The display shows each eye an image with a slightly different perspective to create the illusion of depth. However, this trick makes the user’s eyes behave in an unnatural way: while they converge toward the point where the digital object appears, they accommodate (focus) at a farther distance, where the display image is projected. In normal vision, the vergence and accommodation distances always match. Here they do not, and this mismatch confuses the visual system, leading to nausea, eye strain, and fatigue. The severity of the symptoms varies by user, but after ~20 minutes of use, most will experience them and will want to remove the device for a much-needed break³.

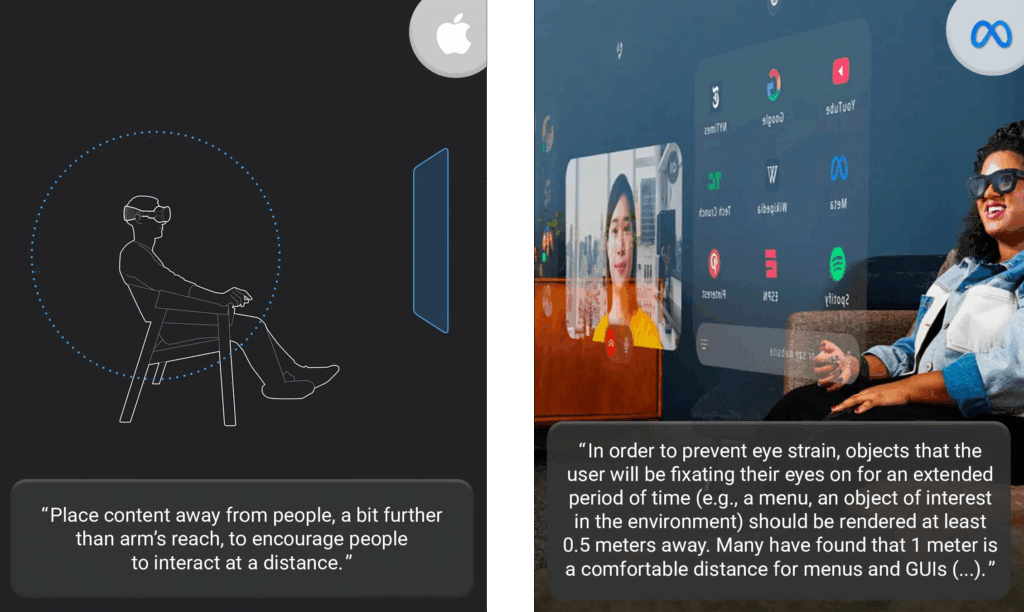

To counter this problem, most AR glasses manufacturers advise XR interaction designers to place digital content far from the user, as close as possible to the distance at which the image is projected. This way, the delta between the vergence and accommodation distances is reduced or even eliminated. Meta, Apple, Microsoft, and Snap have all published guidance to that effect⁴.
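The size of that delta can be illustrated with a little arithmetic. The sketch below is only an illustration, not a model of any particular device: it assumes a fixed focal plane at 1 meter (matching the ~1-meter figure above) and an interpupillary distance of 63 mm, and the function names are hypothetical. It expresses the mismatch in diopters (the inverse of the distance in meters), a common unit for focus demand.

```python
import math

def vergence_angle_deg(distance_m, ipd_m=0.063):
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at distance_m. ipd_m is an assumed interpupillary distance."""
    return math.degrees(2 * math.atan(ipd_m / (2 * distance_m)))

def vac_mismatch_diopters(content_distance_m, focal_distance_m=1.0):
    """Gap between where the eyes converge (the apparent content distance)
    and where they must focus (the assumed fixed focal plane), in diopters."""
    return abs(1.0 / content_distance_m - 1.0 / focal_distance_m)

if __name__ == "__main__":
    # Apparent content distances in meters, from arm's length outward.
    for d in (0.3, 0.5, 1.0, 2.0):
        print(
            f"content at {d:.1f} m: "
            f"vergence angle {vergence_angle_deg(d):.1f} deg, "
            f"mismatch {vac_mismatch_diopters(d):.2f} D"
        )
```

Under these assumptions, content rendered at 0.5 m produces a 1.0 D mismatch, content at 2 m produces 0.5 D, and content at exactly 1 m produces none, which is why the guidance pushes content toward the focal plane.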

What about all the interactions happening up close?

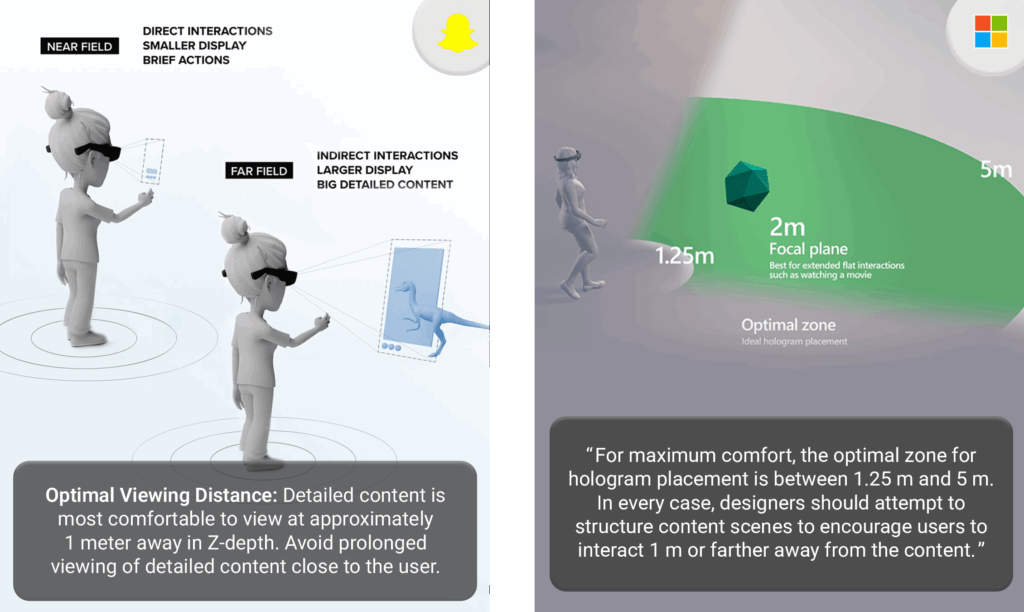

While this design compromise might work for AR applications that “only” project simple content detached from the real world, such as a few lines of text for live translation or video content, the situation is quite different for contextual AR, where digital elements have to appear where they naturally belong.

If watching a video projected 1+ meters away is fine, what about all the interactions happening up close? What about clicking on menus? Reading text or charts? Moving pieces on a board game? If the content is placed at 1+ meters, what would the experience be like, and how would the user’s interaction look to an outside observer? Extending one’s arms straight out in front, or using hand-gesture control (via a wristband), is either socially awkward or simply unnatural compared with direct manipulation. To ensure the best AR experience, all interactions should happen where they naturally occur in the real world.

From 2D to 3D screens

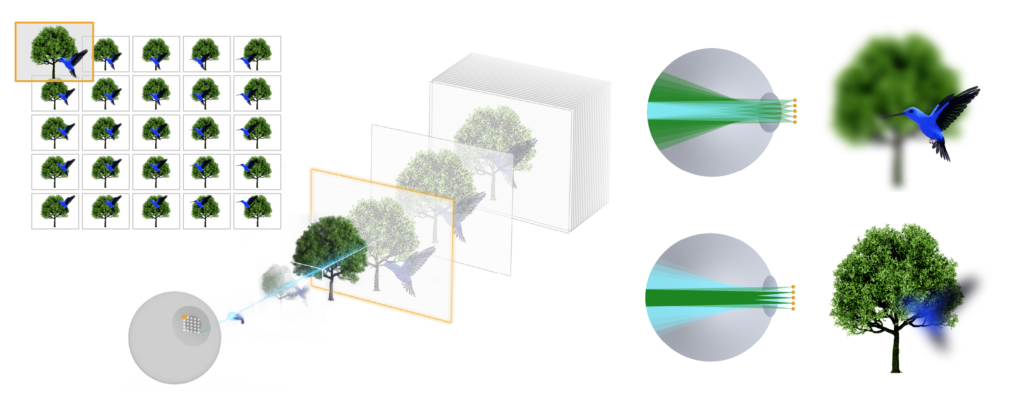

This is why CREAL developed a new display technology that enables a natural visual experience in AR. Designed around the requirements of the human eye, the display recreates the light field as it exists in the real world, projecting digital content with 3D depth cues. The digital image is no longer flat and projected at a fixed distance, but becomes a full 3D scene. The user’s eyes can naturally focus on the digital content at any distance, just as they do in the real world, thereby resolving the vergence-accommodation conflict. Digital objects can be placed at any distance, even within the user’s personal space, enabling the most natural interactions.

If AR is to become the next computing platform, with users wearing AR glasses for most of their day, at home and at work, then those devices need to offer a natural visual experience and contextual AR. Certainly, AR glasses require a great deal of innovation in every component: a battery that lasts long enough, sensors, a miniature camera, etc., all fitted into lightweight and fashionable designs. But for the display, the challenge is not only about incremental innovations to make it brighter, higher in contrast, or more efficient. It’s about creating a real breakthrough in how the image is projected, moving from 2D to 3D. This is the way to widespread AR adoption, and that’s what CREAL is powering.

Sources

1 Gartner, Competitive Landscape: Head-Mounted Displays for Augmented Reality and Virtual Reality, July 2019

2 Wired, Meta Is Shutting Down Horizon Worlds on Meta Quest

3 The Verge, My bad VR trip; WSJ, I Spent 24 Hours Trapped in the Metaverse; Steve Mould, Why 3D sucks – the vergence accommodation conflict